Java Media Framework (JMF)

- The Java Media Framework (JMF) is an application programming interface (API) for incorporating time-based media into Java applications and applets.

- JMF 2.0 is designed to:

- Be easy to program

- Support capturing media data

- Enable the development of media streaming and conferencing applications in Java

- Enable advanced developers and technology providers to implement custom solutions based on the existing API and easily integrate new features with the existing framework

- Provide access to raw media data

- Enable the development of custom, downloadable demultiplexers, codecs, effects processors, multiplexers, and renderers (JMF plug-ins)

- Current version: JMF 2.1.1.

- JMF contains classes that provide support for RTP (Real-Time Transport Protocol).

- RTP enables the transmission and reception of real-time media streams across the network.

- RTP can be used for media-on-demand applications as well as interactive services such as Internet telephony.

- Important web sites:

- The material presented here is adapted from the JMF API Guide (2.9 MB).

JMF 2.1.1e Setup

- You can download it from java.sun.com. Select the product and choose Java Media Framework from the menu.

- After installation, JMF 2.1.1e is located in C:\Program Files\JMF2.1.1e.

- The bin directory contains the JMStudio program, which you can use to transmit or receive multimedia streams. Double-click the JMStudio.exe file, or use the JMStudio icon on the desktop if there is one.

- The lib directory contains the .jar files needed to compile JMF applets or applications. We have set up five PCs in EN149, those facing EN143, with JMF 2.1.1e for your hw4 exercises.

NetBeans 5.0 Setup

- To compile JMF programs using NetBeans 5.0, you need to add the JMF JAR files (jmf.jar, mediaplayer.jar, multiplayer.jar, sound.jar, and customizer.jar) in C:\Program Files\JMF2.1.1e\lib\ to the NetBeans Java libraries.

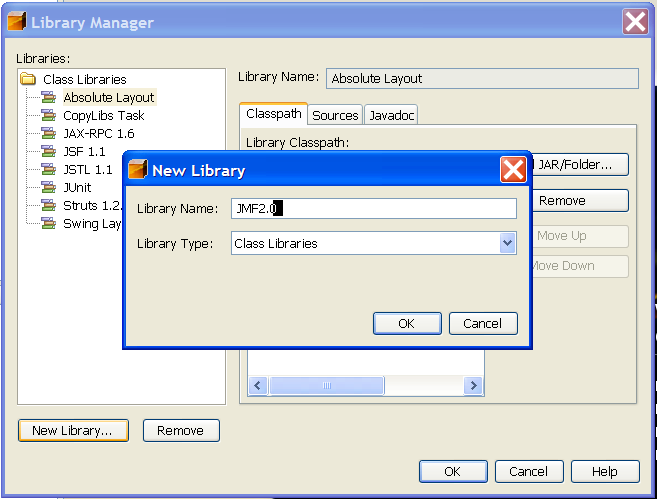

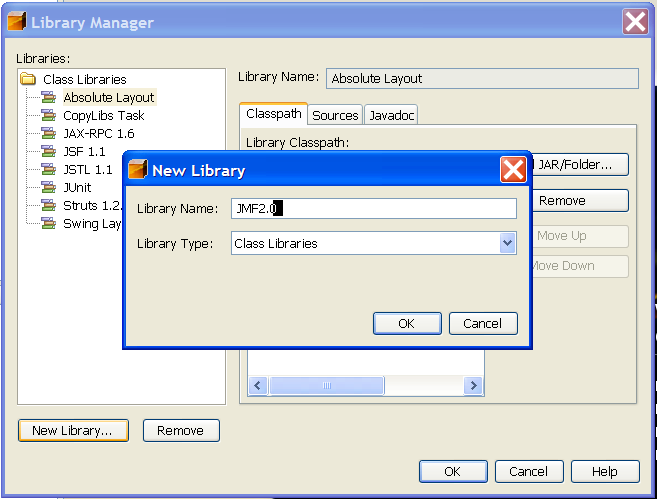

- Select Tools | Library Manager.

- The Libraries panel lists the existing libraries.

- Click "new libraries..." to add a Java library.

- Enter JMF2.1.1e as the name.

- Click OK.

- Click the "Add JAR/Folders..." button.

- The file chooser dialog window appears. Select all five .jar files in C:\program files\jmf2.1.1e\lib.

- You will see jmf.jar;mediaplayer.jar;multiplayer.jar;sound.jar;customizer.jar in the class path.

- Click OK to conclude the Java library selection.

- NetBeans integrates with the Tomcat server (version 5.5.7 for NetBeans 4.1) for testing your client/server applications.

JBuilder Setup (JMF 2.0)

- To compile using JBuilder, you need to add the JMF JAR files (jmf.jar, mediaplayer.jar, multiplayer.jar, and sound.jar) in C:\Program Files\JMF2.0\lib\ to the JBuilder Java libraries as follows:

- Select Project | Default Properties.

- Click the Add button to add a Java library.

- In the "select a java library to add" window, if JMF is already listed, select it.

- Otherwise click the "new" button,

- enter JMF2.0 as the name,

- and click the "..." button to the right of the class path.

- The "Edit class library path" dialog appears. Click the "Add Archives" button.

- The file chooser dialog window appears. Select each of the four .jar files in C:\program files\jmf2.0\lib.

- You will see C:\Program Files\JMF2.0\lib\jmf.jar;C:\Program Files\JMF2.0\lib\mediaplayer.jar;C:\Program Files\JMF2.0\lib\multiplayer.jar;C:\Program Files\JMF2.0\lib\sound.jar in the class path.

- Click OK several times to conclude the Java library selection.

Apache Setup (needed only when you host the Java applets and related media files)

- We will use Apache to serve web pages that contain the JMF player Java applet. Create a c:\Student\<login> directory for Apache 2.0.55. I will use chow as <login> in the steps below.

- apache_2.0.55-win32-x86-no_ssl.msi can be downloaded from httpd.apache.org.

- Double-clicking apache_2.0.55-win32-x86-no_ssl.msi starts the Apache 2.0.55 installer. Instead of installing into the default c:\program files\apache group\, let us install it in the c:\Student\chow directory.

- When prompted for the destination (installation) directory, click the "change" button and select the directory in which to install Apache 2.0.55. You may want to create

- Since we do not have the privilege to install server software packages in c:\program files, Apache 2.0.55 will be installed in C:\student\chow\Apache2.

- When prompted for the server information, accept the default choices, such as EN149-29.eas.uccs.edu, and change the admin name to <login>@uccs.edu.

- In the C:\student\chow\Apache2\bin directory, you will find apache.exe, which can be double-clicked to start the Apache web server. Under C:\student\chow\Apache2 you will also find conf (configuration directory), htdocs (default web pages directory), cgi-bin (script directory), and logs (log record directory). The conf/httpd.conf configuration file must be set to use port 8080: "Listen 8080".

- After modifying/checking httpd.conf, double-click apache.exe to start the web server.

- Create a test.html file in c:\Student\chow\Apache2\htdocs.

- You can verify that the Apache web server is running by typing http://localhost:8080/test.html in the browser.

- The web server can also serve web pages to any web browser on the Internet. You can find the server's IP address by typing "ipconfig" at a command prompt. Say it is 128.198.174.43. Then, in the browser on another machine, you can type http://128.198.174.43:8080/test.html to test the web page served by the web server machine.

Time-based media

- Any data that changes meaningfully with respect to time can be characterized as time-based media.

- Audio clips, MIDI sequences, movie clips, and animations are common forms of time-based media.

- Time-based media is often referred to as streaming media: it is delivered in a steady stream that must be received and processed within a particular timeframe to produce acceptable results.

- A media stream is the media data obtained from a local file, acquired over the network, or captured from a camera or microphone.

- Media streams that contain multiple tracks are often referred to as multiplexed or complex media streams.

- Demultiplexing is the process of extracting individual tracks from a complex media stream.

- Media streams can be categorized according to how the data is delivered:

- Pull (Client Side Pull): data transfer is initiated and controlled from the client side. For example, Hypertext Transfer Protocol (HTTP) and FILE are pull protocols.

- Push (Server Side Push): the server initiates data transfer and controls the flow of data. For example, Real-time Transport Protocol (RTP) is a push protocol used for streaming media. Similarly, the SGI MediaBase protocol is a push protocol used for video-on-demand (VOD).

- An output destination for media data is sometimes referred to as a data sink. Examples: speaker, monitor, file, network connection.
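
The difference between pull and push delivery can be sketched in plain Java. This is only a toy model and does not use the JMF classes: the names PullSource, PushSource, and setTransferHandler below are hypothetical and chosen for illustration. In the pull case the client loops and asks for data; in the push case the source drives delivery and calls a handler that the client registered.

```java
import java.util.ArrayDeque;
import java.util.Queue;
import java.util.function.Consumer;

// Toy model only: all class and method names here are hypothetical, not JMF API.
class PullPushDemo {
    /** Pull: the client decides when to ask for the next chunk. */
    static class PullSource {
        private final Queue<String> chunks = new ArrayDeque<>();
        PullSource(String... data) {
            for (String d : data) chunks.add(d);
        }
        /** Returns the next chunk, or null when the source is exhausted. */
        String read() {
            return chunks.poll();
        }
    }

    /** Push: the source drives delivery and calls the client's handler. */
    static class PushSource {
        private final String[] data;
        private Consumer<String> handler = chunk -> { };
        PushSource(String... data) {
            this.data = data;
        }
        void setTransferHandler(Consumer<String> handler) {
            this.handler = handler;
        }
        void start() {
            for (String d : data) handler.accept(d);
        }
    }

    /** Client-side pull loop: keep reading until the source has no more data. */
    static String pullAll(PullSource src) {
        StringBuilder sb = new StringBuilder();
        for (String c = src.read(); c != null; c = src.read()) sb.append(c);
        return sb.toString();
    }

    /** Push: register a handler, then let the source control the flow. */
    static String pushAll(PushSource src) {
        StringBuilder sb = new StringBuilder();
        src.setTransferHandler(sb::append);
        src.start();
        return sb.toString();
    }

    public static void main(String[] args) {
        System.out.println(pullAll(new PullSource("a", "b", "c")));
        System.out.println(pushAll(new PushSource("x", "y")));
    }
}
```

Both helpers collect the same kind of data; what differs is who controls the timing of each transfer.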

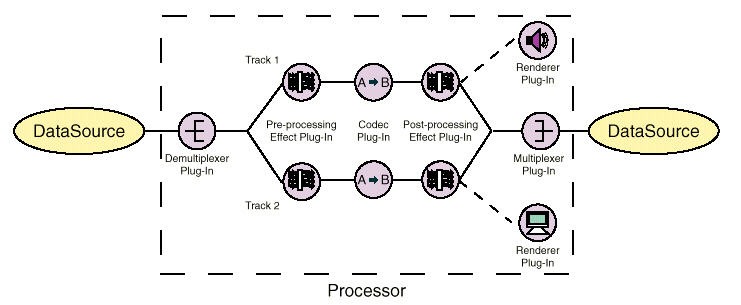

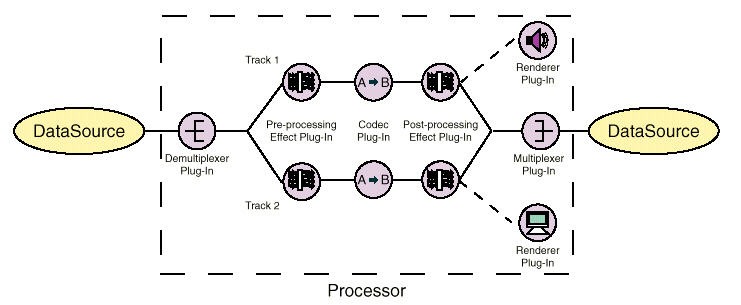

Media Processing Model

- Capture to a file

1. The audio and video tracks would be captured.

2. Effect filters would be applied to the raw tracks (if desired).

3. The individual tracks would be encoded.

4. The compressed tracks would be multiplexed into a single media stream.

5. The multiplexed media stream would then be saved to a file.

- Presentation

1. If the stream is multiplexed, the individual tracks are extracted.

2. If the individual tracks are compressed, they are decoded.

3. If necessary, the tracks are converted to a different format.

4. Effect filters are applied to the decoded tracks (if desired).

- A codec performs media-data compression and decompression.

- Each codec has certain input formats that it can handle and certain output formats that it can generate.

Effect Filters

- An effect filter modifies the track data in some way, often to create special effects such as blur or echo.

- Effect filters are classified as either pre-processing effects or post-processing effects, depending on whether they are applied before or after the codec processes the track.

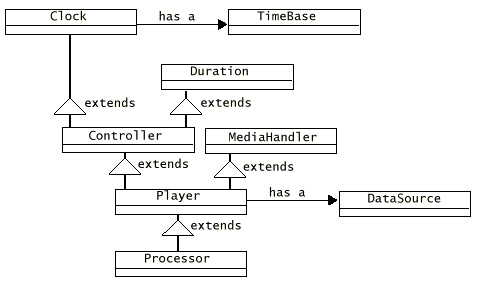

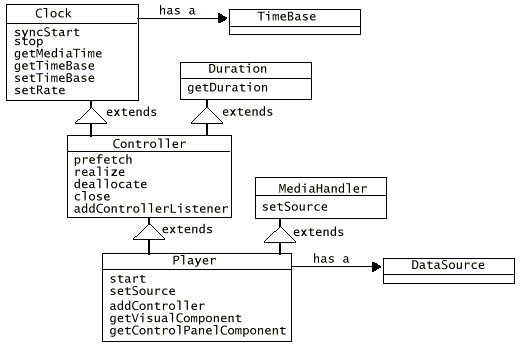

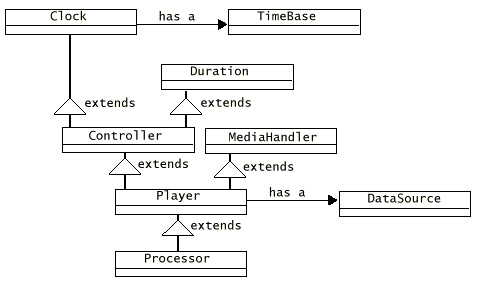

JMF High Level Architecture

- JMF keeps time to nanosecond precision.

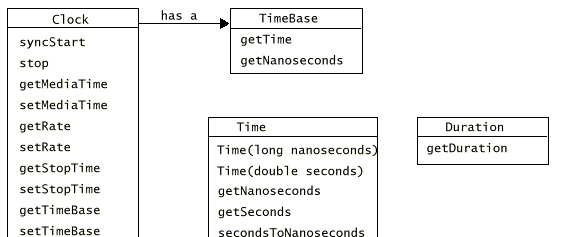

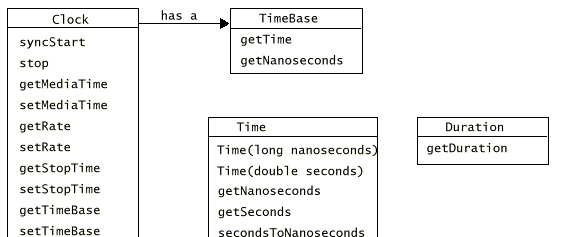

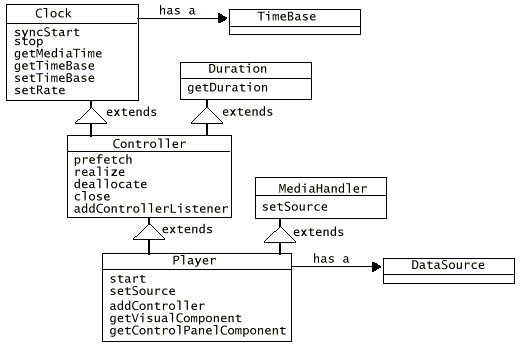

JMF Time Model

- TimeBase provides a constantly ticking time source, much like a crystal oscillator in a watch.

- The time-base time cannot be stopped or reset.

- Time-base time is often based on the system clock.

- A Clock object's media time represents the current position within a media stream:

- the beginning of the stream is media time zero,

- the end of the stream is the maximum media time for the stream.

- The duration of the media stream is the elapsed time from start to finish.

- Clock uses:

- The time-base start-time: the time that Clock's TimeBase reports when the presentation begins.

- The media start-time: the position in the media stream where presentation begins.

- The playback rate: how fast the Clock is running in relation to its TimeBase.

- The rate is a scale factor that is applied to the TimeBase:

- a rate of 1.0 represents the normal playback rate for the media stream,

- a rate of 2.0 indicates that the presentation will run at twice the normal rate.

- A negative rate indicates that the Clock is running in the opposite direction from its TimeBase; for example, a negative rate might be used to play a media stream backward.

- MediaTime = MediaStartTime + Rate * (TimeBaseTime - TimeBaseStartTime)

- When the presentation stops, the media time stops, but the time-base time continues to advance.
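
The media-time mapping can be checked with a small worked example. The sketch below is plain Java arithmetic, not the JMF Clock API; the class and method names are hypothetical, and times are assumed to be in nanoseconds to match JMF's stated precision.

```java
// Worked example of MediaTime = MediaStartTime + Rate * (TimeBaseTime - TimeBaseStartTime).
// Hypothetical helper, not part of JMF; all times are assumed to be in nanoseconds.
class MediaTimeDemo {
    static long mediaTimeNanos(long mediaStartNanos, double rate,
                               long timeBaseNanos, long timeBaseStartNanos) {
        long elapsedOnTimeBase = timeBaseNanos - timeBaseStartNanos;
        return mediaStartNanos + Math.round(rate * elapsedOnTimeBase);
    }

    public static void main(String[] args) {
        long second = 1_000_000_000L;
        // Normal rate: 3 s of time-base time advances the media by 3 s.
        System.out.println(mediaTimeNanos(0L, 1.0, 5 * second, 2 * second));
        // Double rate: the same 3 s of time-base time advances the media by 6 s.
        System.out.println(mediaTimeNanos(0L, 2.0, 5 * second, 2 * second));
        // Negative rate: starting at media time 10 s, playing backward for 3 s.
        System.out.println(mediaTimeNanos(10 * second, -1.0, 5 * second, 2 * second));
    }
}
```

With a rate of 1.0 the media time simply tracks the elapsed time-base time; other rates scale that elapsed time before it is added to the media start-time.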

Managers

- JMF uses intermediary objects called managers to integrate new implementations of key interfaces that define the behavior and interaction of objects used to capture, process, and present time-based media.

- JMF uses four managers:

- Manager: handles the construction of Players, Processors, DataSources, and DataSinks.

- PackageManager: maintains a registry of packages that contain JMF classes, such as custom Players, Processors, DataSources, and DataSinks.

- CaptureDeviceManager: maintains a registry of available capture devices.

- PlugInManager: maintains a registry of available JMF plug-in processing components, such as Multiplexers, Demultiplexers, Codecs, Effects, and Renderers.

Write JMF programs:

- Use the Manager create methods to construct the Players, Processors, DataSources, and DataSinks for your application.

- Use the CaptureDeviceManager to find out what capture devices are available and access information about them.

- Query the PlugInManager to determine what plug-ins have been registered for processing the media data.

- Register new plug-ins with the PlugInManager to make them available to Processors that support the plug-in API.

- To use a custom Player, Processor, DataSource, or DataSink with JMF, you register your unique package prefix with the PackageManager.

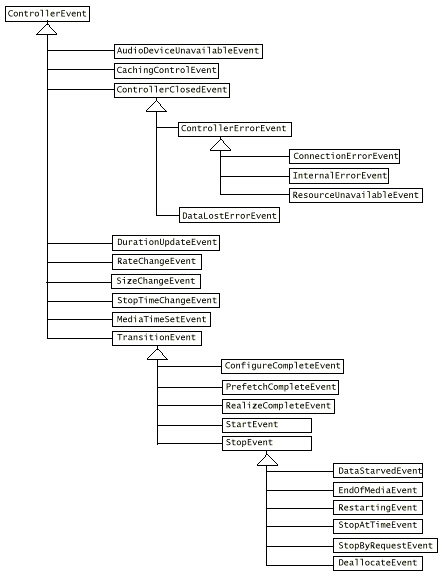

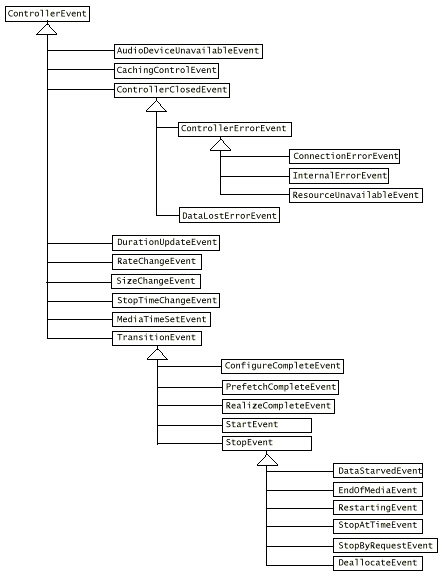

Event Model

- JMF uses a structured event reporting mechanism to keep JMF-based programs informed of the current state of the media system, e.g., out of data or resource unavailable.

- MediaEvent is subclassed to identify many particular types of events. These objects follow the established Java Beans patterns for events.

- JMF follows the Java event delegation model: for each type of object that can post MediaEvents, JMF defines a corresponding listener interface. To receive notification when a MediaEvent is posted, you implement the appropriate listener interface and register your listener class with the object that posts the event by calling its addListener method.

- Controller objects (such as Players and Processors) and certain Control objects such as GainControl post media events.
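
The delegation pattern described above can be illustrated without the JMF library. The sketch below is a toy model: ToyMediaEvent, ToyEndOfMediaEvent, ToyListener, and ToyController are hypothetical stand-ins, not JMF classes. It only shows the shape of the mechanism: an event base class that is subclassed, a listener interface, and a poster that notifies every registered listener.

```java
import java.util.ArrayList;
import java.util.List;

// Toy model of the event delegation pattern; none of these types are JMF classes.
class EventModelDemo {
    /** Base event type; subclasses identify particular kinds of events. */
    static class ToyMediaEvent {
        final Object source;
        ToyMediaEvent(Object source) {
            this.source = source;
        }
    }

    static class ToyEndOfMediaEvent extends ToyMediaEvent {
        ToyEndOfMediaEvent(Object source) {
            super(source);
        }
    }

    /** Listener interface implemented by code that wants notifications. */
    interface ToyListener {
        void update(ToyMediaEvent event);
    }

    /** An object that posts events to every registered listener. */
    static class ToyController {
        private final List<ToyListener> listeners = new ArrayList<>();
        void addListener(ToyListener listener) {
            listeners.add(listener);
        }
        void post(ToyMediaEvent event) {
            for (ToyListener l : listeners) l.update(event);
        }
    }

    /** Registers one listener, posts two events, and returns what the listener saw. */
    static List<String> demo() {
        List<String> seen = new ArrayList<>();
        ToyController controller = new ToyController();
        controller.addListener(e -> seen.add(e.getClass().getSimpleName()));
        controller.post(new ToyMediaEvent(controller));
        controller.post(new ToyEndOfMediaEvent(controller));
        return seen;
    }

    public static void main(String[] args) {
        System.out.println(demo());
    }
}
```

A listener typically inspects the concrete event class to decide how to react, which is why the event hierarchy is subclassed per event type.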

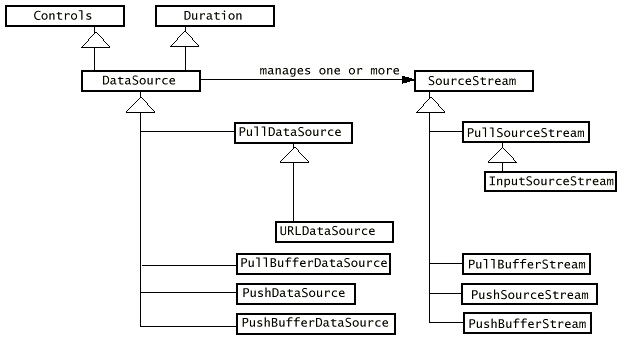

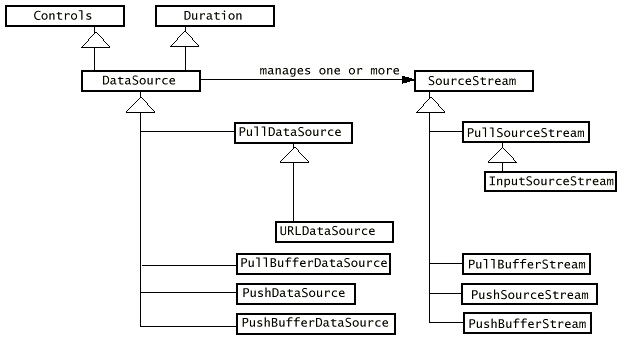

Data Model

- JMF media players usually use DataSources to manage the transfer of media content.

- A DataSource encapsulates both the location of media and the protocol and software used to deliver the media.

- A DataSource is identified by either a JMF MediaLocator or a URL (uniform resource locator).

- A MediaLocator is similar to a URL and can be constructed from a URL, but can be constructed even if the corresponding protocol handler is not installed on the system.

- A standard data source uses a byte array as the unit of transfer.

- A buffer data source uses a Buffer object as its unit of transfer.
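
The reason a locator that does not depend on installed protocol handlers is useful can be seen with the standard library alone. In the sketch below, java.net.URI stands in for the role MediaLocator plays (this is an analogy, not the JMF class), and the rtp:// string is an illustrative locator. It assumes a stock JDK with no custom rtp handler registered: converting the locator to a java.net.URL then fails, while the URI can still be parsed and inspected.

```java
import java.net.MalformedURLException;
import java.net.URI;

// Analogy only: java.net.URI plays the role of a handler-independent locator.
// The class and method names here are hypothetical.
class LocatorDemo {
    /** Returns the protocol (scheme) part of a locator string. */
    static String protocolOf(String locator) {
        return URI.create(locator).getScheme();
    }

    /** True if the JDK can build a java.net.URL, i.e. a protocol handler exists. */
    static boolean hasUrlHandler(String locator) {
        try {
            URI.create(locator).toURL();
            return true;
        } catch (MalformedURLException | IllegalArgumentException e) {
            return false;
        }
    }

    public static void main(String[] args) {
        String rtp = "rtp://239.0.0.1:3000/video"; // illustrative locator
        System.out.println(protocolOf(rtp) + " handler installed: " + hasUrlHandler(rtp));
        String http = "http://localhost:8080/test.html";
        System.out.println(protocolOf(http) + " handler installed: " + hasUrlHandler(http));
    }
}
```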

Specialty Data Source:

- There are two types of specialty data sources: cloneable data sources and merging data sources.

- Cloneable data sources implement the SourceCloneable interface, which defines one method, createClone.

- By calling createClone, you can create any number of clones of the DataSource that was used to construct the cloneable DataSource.

- A MergingDataSource can be used to combine the SourceStreams from several DataSources into a single DataSource.

- To construct a MergingDataSource, you call the Manager createMergingDataSource method and pass in an array that contains the data sources you want to merge.

- To be merged, all of the DataSources must be of the same type.

Data Format

- An AudioFormat describes the attributes specific to an audio format, such as sample rate, bits per sample, and number of channels.

- A VideoFormat encapsulates information relevant to video data.

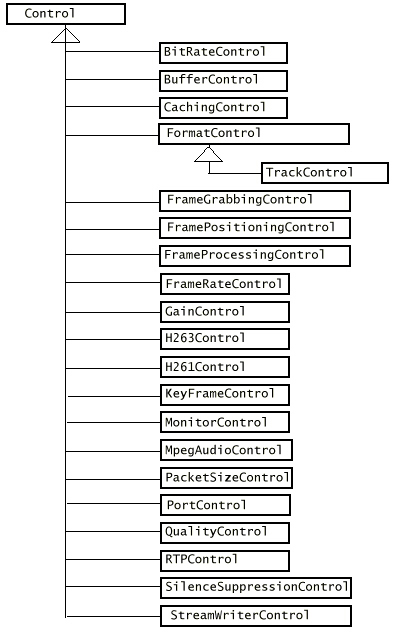

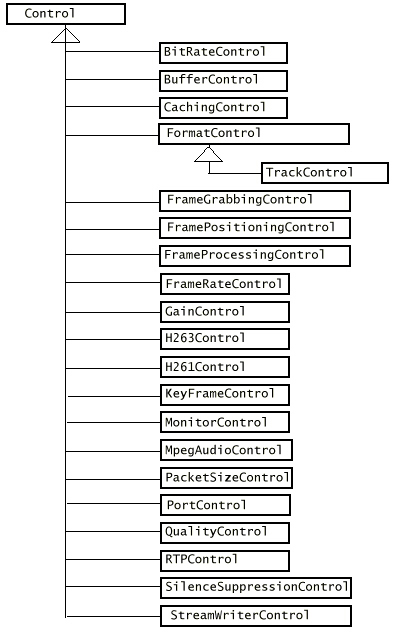

Control

- JMF Control provides a mechanism for setting and querying attributes of an object.

- A Control often provides access to a corresponding user interface component that enables user control over an object's attributes.

- Many JMF objects expose Controls, including Controller objects, DataSource objects, DataSink objects, and JMF plug-ins.

- Any JMF object that wants to provide access to its corresponding Control objects can implement the Controls interface.

- Controls defines methods for retrieving associated Control objects.

- DataSource and PlugIn use the Controls interface to provide access to their Control objects.

- CachingControl enables download progress to be monitored and displayed. If a Player or Processor can report its download progress, it implements this interface so that a progress bar can be displayed to the user.

- The StreamWriterControl interface enables the user to limit the size of the stream that is created.

Gain Control

- GainControl enables audio volume adjustments such as setting the level and muting the output of a Player or Processor.

- It also supports a listener mechanism for volume changes.

- FramePositioningControl enables precise frame positioning within a Player or Processor object's media stream.

- FrameGrabbingControl provides a mechanism for grabbing a still video frame from the video stream.

List of JMF controls:

TrackControl

- TrackControl is a type of FormatControl that provides the mechanism for controlling what processing a Processor object performs on a particular track of media data.

- With the TrackControl methods, you can specify what format conversions are performed on individual tracks and select the Effect, Codec, or Renderer plug-ins that are used by the Processor.

- PortControl defines methods for controlling the output of a capture device.

- MonitorControl enables media data to be previewed as it is captured or encoded.

- BufferControl enables user-level control over the buffering done by a particular object.

User Interface Components

- To get the default user interface component for a particular Control, you call getControlComponent.

- A Player provides access to both a visual component and a control panel component. To retrieve these components, you call the Player methods getVisualComponent and getControlPanelComponent.

Presentation

- In JMF, the presentation process is modeled by the Controller interface.

- The JMF API defines two types of Controllers: Players and Processors.

- A Player or Processor is constructed for a particular data source.

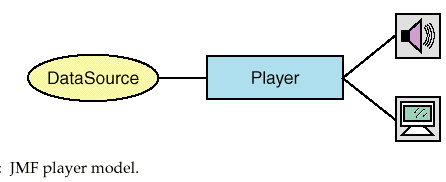

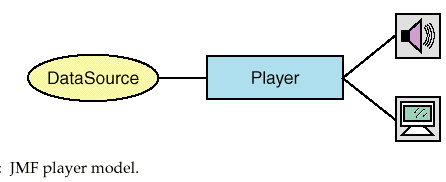

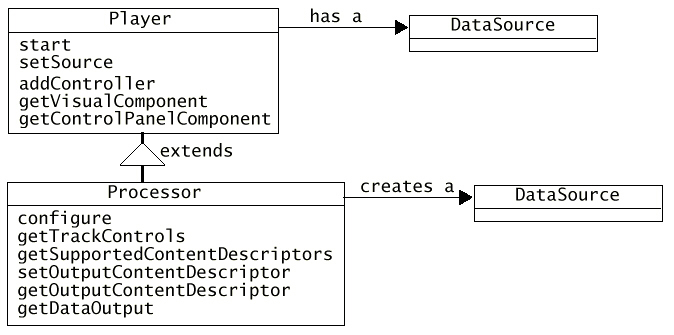

Player

- A Player processes an input stream of media data and renders it at a precise time.

- A DataSource is used to deliver the input media stream to the Player.

- The rendering destination depends on the type of media being presented.

- A Player does not provide any control over the processing that it performs or how it renders the media data. (Use a Processor for that purpose.)

- Player supports standardized user control and relaxes some of the operational restrictions imposed by Clock and Controller.

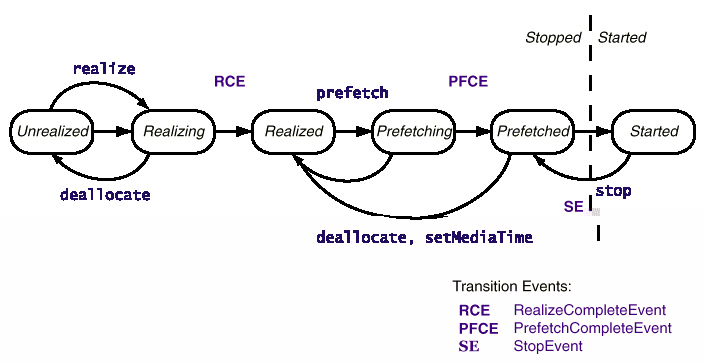

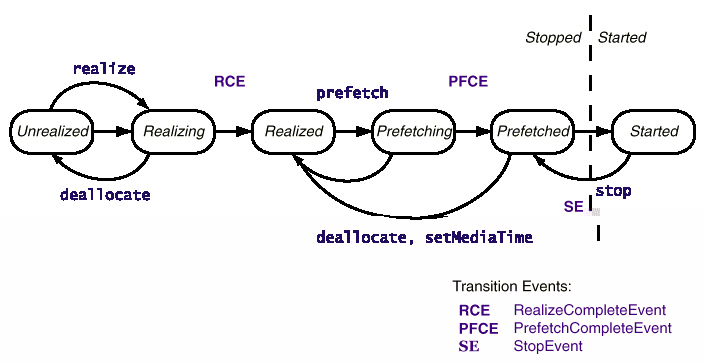

Player's States

- A Realizing Player is in the process of determining its resource requirements and acquiring the resources that it needs to acquire only once. Exclusive-use resources are obtained later, during prefetching.

- A Prefetching Player is preparing to present its media. During this phase, the Player preloads its media data, obtains exclusive-use resources, and does whatever else it needs to do to prepare itself to play.

- Calling start puts a Player into the Started state. A Started Player object's time-base time and media time are mapped and its clock is running.
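
The progression through Player states can be modeled as a small state machine. The sketch below is a toy model in plain Java, not the JMF Player class: the six state names follow the standard JMF Player state list (an assumption beyond the text above, which names only some of them), and ToyPlayer and its methods are hypothetical. The start method here walks through any states that have not yet been reached, which mirrors how a Player relaxes the restrictions a plain Controller imposes.

```java
import java.util.ArrayList;
import java.util.List;

// Toy state machine; ToyPlayer is hypothetical and does not use the JMF API.
class PlayerStateDemo {
    enum State { UNREALIZED, REALIZING, REALIZED, PREFETCHING, PREFETCHED, STARTED }

    static class ToyPlayer {
        private State state = State.UNREALIZED;
        private final List<State> history = new ArrayList<>();

        ToyPlayer() {
            history.add(state);
        }

        State getState() {
            return state;
        }

        /** Every state the player has passed through, in order. */
        List<State> history() {
            return history;
        }

        private void moveTo(State next) {
            state = next;
            history.add(next);
        }

        /** Determine resource requirements; passes through REALIZING. */
        void realize() {
            if (state == State.UNREALIZED) {
                moveTo(State.REALIZING);
                moveTo(State.REALIZED);
            }
        }

        /** Preload media and obtain exclusive-use resources; realizes first if needed. */
        void prefetch() {
            realize();
            if (state == State.REALIZED) {
                moveTo(State.PREFETCHING);
                moveTo(State.PREFETCHED);
            }
        }

        /** Start presenting; realizes and prefetches first if needed. */
        void start() {
            prefetch();
            if (state == State.PREFETCHED) {
                moveTo(State.STARTED);
            }
        }
    }

    public static void main(String[] args) {
        ToyPlayer player = new ToyPlayer();
        player.start();
        System.out.println(player.history());
    }
}
```

In the real framework the transitional states take time and complete asynchronously, with events posted on completion; the toy model collapses them into immediate transitions.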

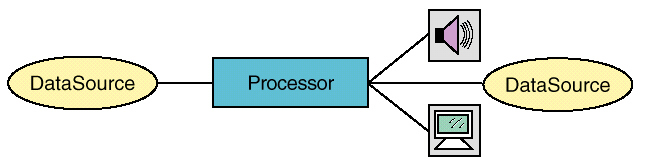

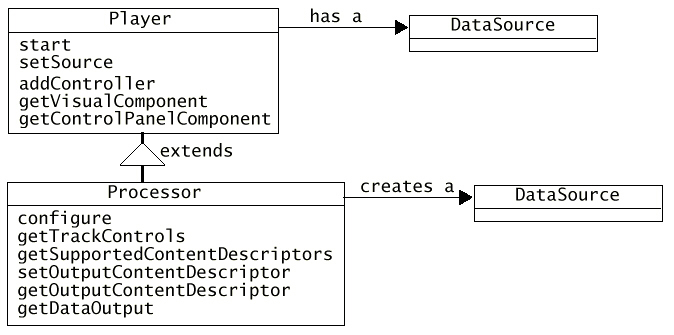

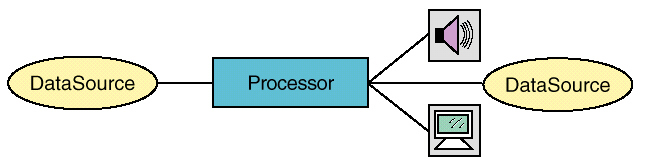

Processors

- A Processor is a specialized type of Player that provides control over what processing is performed on the input media stream. A Processor supports all of the same presentation controls as a Player.

- A Processor can send its media data output through a DataSource.

- Processor Stages

- Transcoding is the process of converting each track of media data from one input format to another.

- The processing at each stage is performed by a separate processing component.

- These processing components are JMF plug-ins.

JMF Plug-ins

- There are five types of JMF plug-ins:

- Demultiplexer: parses media streams such as WAV, MPEG or QuickTime. If the stream is multiplexed, the separate tracks are extracted.

- Effect: performs special effects processing on a track of media data.

- Codec: performs data encoding and decoding.

- Multiplexer: combines multiple tracks of input data into a single interleaved output stream and delivers the resulting stream as an output DataSource.

- Renderer: processes the media data in a track and delivers it to a destination such as a screen or speaker.

Processor States

- A Processor has two more states than a Player: Configuring and Configured.

- While the Processor is in the Configuring state, it connects to the DataSource, demultiplexes the input stream, and accesses information about the format of the input data.

- The Processor moves into the Configured state when it is connected to the DataSource and the data format has been determined.

- When the Processor reaches the Configured state, a ConfigureCompleteEvent is posted.

Processing Controls

- You control what processing operations the Processor performs on a track through the TrackControl for that track.

- Call the Processor getTrackControls method to get the TrackControl objects for all of the tracks in the media stream.

- Through a TrackControl, you can explicitly select the Effect, Codec, and Renderer plug-ins you want to use for the track.

- To find out what options are available, you can query the PlugInManager to find out what plug-ins are installed.

- To control the transcoding that is performed on a track by a particular Codec, you can get the Controls associated with the track by calling the TrackControl getControls method. This method returns the codec controls available for the track, such as BitRateControl and QualityControl.

Data Output

- The getDataOutput method returns a Processor object's output as a DataSource.

JMF Events

Capture

- Multimedia capture devices are abstracted as DataSources.

- A device that provides timely delivery of data can be represented as a PushDataSource.

- Some devices deliver multiple data streams; for example, an audio/video conferencing board might deliver both an audio and a video stream.

Media Data Storage and Transmission

- A DataSink is used to read media data from a DataSource and render the media to some destination, generally a destination other than a presentation device.

- A particular DataSink might

- write data to a file,

- write data across the network,

- or function as an RTP broadcaster.

- Like Players, DataSink objects are constructed through the Manager using a DataSource.

- A DataSink can use a StreamWriterControl to provide additional control over how data is written to a file.

- DataSinkEvent subtypes:

- DataSinkErrorEvent, which indicates that an error occurred while the DataSink was writing data.

- EndOfStreamEvent, which indicates that the entire stream has successfully been written.

Exercise 1: Playing a Movie in an Applet

- Here is the web page, say jmftest.html, with the applet tag:

<html>
<body>
This is a test of SimplePlayer Applet.
<hr>
<applet code="SimplePlayerApplet.class" width=320 height=300>
<param name=file value="uccs.rm">
</applet>
</body>
</html>

- Source code of the Applet.

- Compile the SimplePlayerApplet using NetBeans. See more detailed steps in Hw4.

- You will see com and javax directories created in your directory. They contain the classes needed to serve the applet.

-

Run the

- You can ftp the web page, the applet class file, and those two directories to your cs525 site on the CS Unix machines. Then you can access the applet from home.

- Here is another web page with a different QuickTime movie.

Exercise: Video Multicast over RTP

- There is a utility Java application called VideoTransmit, which can be used to transmit video using JPEG over RTP.

- Source code of VideoTransmit.java

- Modify the transmit time limit from 30 seconds to 10 minutes by replacing

Thread.currentThread().sleep(30000);

with

Thread.currentThread().sleep(600000);

- Make (compile) the VideoTransmit.java application.

- Copy darkcity.mov from H:\cs\cs525\jmf to your f:\student\<login> directory.

- Multicast darkcity.mov with the following command in an MS-DOS prompt window:

java VideoTransmit file:/f:/student/<login>/darkcity.mov 239.0.0.1 3000

- Note that the media file argument uses URL syntax.

- 239.0.0.1 is a class D multicast IP address in the administratively scoped (locally administered) range; it will not be forwarded outside the local domain.

- 3000 is the port number.
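
The properties of the address used in the command can be checked with the standard java.net classes. This is a small sketch, separate from VideoTransmit itself; the class and helper names are hypothetical. It verifies that 239.0.0.1 is a multicast (class D) address and that its first octet is 239, which is the block reserved for administratively scoped multicast.

```java
import java.net.InetAddress;
import java.net.UnknownHostException;

// Hypothetical helpers for inspecting the multicast address used in the exercise.
class MulticastAddressDemo {
    private static InetAddress parse(String ip) {
        try {
            // For a literal IP address this only validates the format; no DNS lookup.
            return InetAddress.getByName(ip);
        } catch (UnknownHostException e) {
            throw new IllegalArgumentException("not a valid address: " + ip, e);
        }
    }

    /** True for class D (multicast) addresses, 224.0.0.0 through 239.255.255.255. */
    static boolean isMulticast(String ip) {
        return parse(ip).isMulticastAddress();
    }

    /** True for IPv4 addresses in 239.0.0.0/8, the administratively scoped block. */
    static boolean isAdminScoped(String ip) {
        byte[] octets = parse(ip).getAddress();
        return octets.length == 4 && (octets[0] & 0xFF) == 239;
    }

    public static void main(String[] args) {
        System.out.println("239.0.0.1 multicast: " + isMulticast("239.0.0.1"));
        System.out.println("239.0.0.1 admin scoped: " + isAdminScoped("239.0.0.1"));
        System.out.println("128.198.174.43 multicast: " + isMulticast("128.198.174.43"));
    }
}
```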

- To receive this multicast, you can use JMStudio. Click on the JMStudio icon on the desktop or click on JMStudio.bat in c:\program files\jmf2.0\bin.

- You should see

-

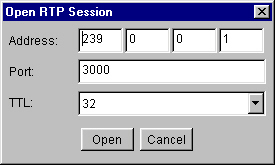

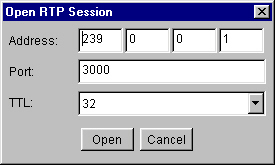

Select File | Open RTP Session. You will see

- Enter the multicast IP address 239.0.0.1 and port number 3000. TTL (Time to Live) can be set to 1. Click Open.

-

You will see

-

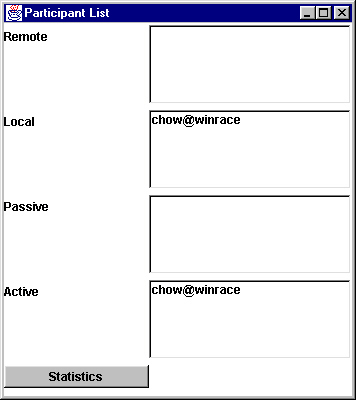

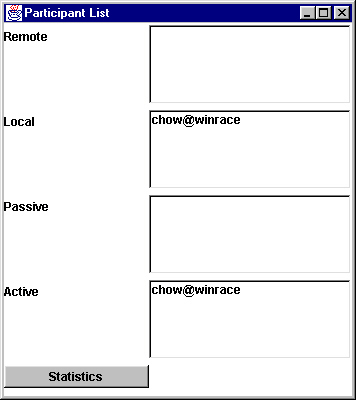

Click the Participant List. You will see the participants involved in this multicast. The active list shows the senders.

-

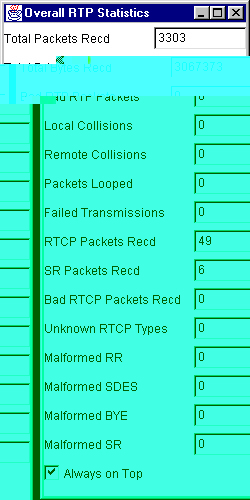

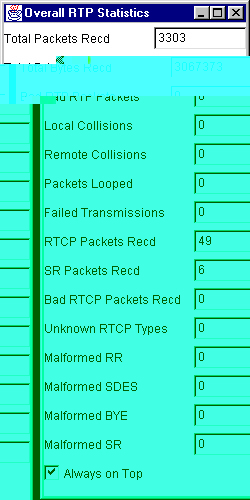

If you click the statistics, a window will show the RTP and RTCP packet statistics.

- Here you see the total packets and SR/RTCP packets Rcvd

-

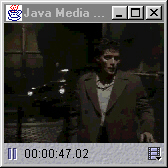

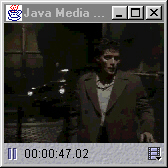

When the multicast video arrives, a video window will pop up, similar to

-